68% of organizations have experienced data leakage incidents related to employees sharing sensitive information with AI tools (Metomic, 2025 State of Data Security Report). Of the 13% of organizations that reported breaches involving AI models, 97% lacked proper AI access controls (IBM, Cost of a Data Breach Report 2025).

Companies are adopting AI faster than they can secure it. If you work in operations, support, revenue, or deal desk, this gap between deployment speed and governance readiness is your problem right now, whether your title says "security" or not.

The three fears behind corporate AI resistance

Fear 1: "Where does our data go?"

When employees use consumer AI tools, including paid consumer plans, their inputs can be used to train the model by default. That is the default on consumer ChatGPT, on Claude's Free, Pro, and Max plans (per Anthropic's August 2025 policy update), and on consumer Gemini (as of March 2026). Once proprietary data enters a vendor's training pipeline, you cannot reliably retrieve, track, or delete it.

64% of professionals worry about inadvertently sharing sensitive data publicly or with competitors through AI tools (Cisco, 2025 Data Privacy Benchmark Study). And 73.8% of ChatGPT accounts in the workplace are personal, non-corporate accounts that bypass every enterprise protection the company has set up (Cyberhaven, Q2 2024 AI Adoption and Risk Report).

The volume of corporate data fed into AI tools increased 485% between March 2023 and March 2024. Over a quarter of it (27.4%) qualified as sensitive (Cyberhaven, Q2 2024 AI Adoption and Risk Report).

Fear 2: "Are we breaking the law?"

The EU AI Act's prohibited practices provisions took effect February 2, 2025, with penalties of up to 35 million euros or 7% of global annual turnover, whichever is higher. Italy's Garante fined OpenAI 15 million euros in December 2024, the first GDPR penalty targeting a generative AI company, though a Rome court annulled the fine on March 18, 2026.

In the U.S., 38 states adopted roughly 100 AI-related measures in 2025 (National Conference of State Legislatures). Colorado's AI Act (SB 24-205) takes effect June 30, 2026. The U.S. Treasury released a Financial Services AI Risk Management Framework in February 2026 with 230 control objectives across 500+ pages.

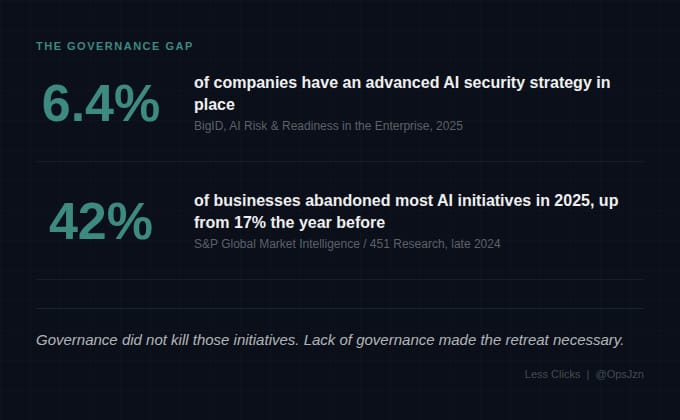

Here is what that gap looks like: only 6.4% of companies have an advanced AI security strategy (BigID, AI Risk & Readiness in the Enterprise: 2025 Report). 42% of companies reported abandoning most of their AI initiatives, up from 17% a year earlier (S&P Global Market Intelligence / 451 Research, Voice of the Enterprise: AI & ML Use Cases 2025). Governance did not kill those initiatives. Lack of governance made the retreat necessary.

Fear 3: "What about the stuff we can't see?"

Shadow AI is the most underestimated risk vector in enterprise AI adoption.

50% of knowledge workers use non-company-issued AI tools, according to a survey of 6,000 knowledge workers across the U.S., U.K., and Germany (Software AG, "Chasing Shadows" report, October 2024). 46% said they would refuse to give those tools up even if their company banned them outright.

Security leaders are not immune. 81% of employees admitted to using unapproved AI tools, and security leaders reported even higher usage rates (UpGuard, State of Shadow AI report, November 2025).

One in five organizations (20%) in IBM's study reported a breach involving shadow AI. Organizations with high shadow AI usage pay an average of $670,000 more per breach ($4.74M vs. $4.07M). Shadow AI breaches disproportionately compromise customer PII (65% vs. 53% average) and intellectual property (40% vs. 33% average) (IBM, Cost of a Data Breach Report 2025).

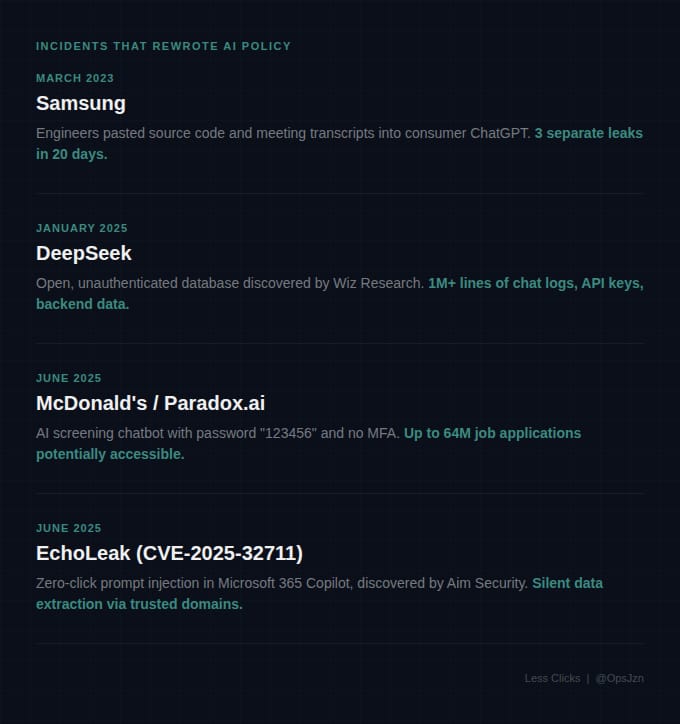

Real incidents that rewrote corporate AI policy

Samsung, March 2023. Within 20 days of allowing engineers to use ChatGPT, employees pasted proprietary source code, equipment diagnostics, and confidential meeting transcripts into the consumer tool. Samsung responded with a company-wide ban.

DeepSeek, January 2025. Wiz Research discovered a completely open, unauthenticated database containing over 1 million lines of log streams: chat histories in plaintext, API keys, backend details. The U.S. Navy and the Pentagon also moved to block DeepSeek.

McDonald's/Paradox.ai, June 2025. An AI chatbot screening franchise applicants used a test admin account with the password "123456." No MFA. Abandoned since 2019. An API vulnerability made up to 64 million job applications potentially accessible. The largest known AI chatbot data exposure to date.

EchoLeak, June 2025. Aim Security discovered the first known zero-click prompt injection in a production AI system, targeting Microsoft 365 Copilot (CVE-2025-32711). An attacker sends a business email with hidden instructions. When a user later asks Copilot a related question, it retrieves the malicious email and silently exfiltrates data from OneDrive, SharePoint, Teams, and Outlook through trusted Microsoft domains. No clicks required. No alerts triggered.

Notice the pattern. Most of these are not sophisticated attacks. They are mundane infrastructure problems: default passwords, open databases, missing access controls. AI amplifies the blast radius of basic security failures.

What changes between consumer and enterprise AI tiers

Every major AI vendor commits to not training on enterprise or API data by default. Consumer tiers operate under different rules.

OpenAI's consumer ChatGPT uses your data for training by default. Anthropic announced in August 2025 that Claude users on Free, Pro, and Max plans would be asked whether their chats can train future models, with the training toggle pre-checked. Google's consumer Gemini sends conversations to human reviewers by default, while Vertex AI prohibits training on customer data without permission. (Verify each vendor's current policy before making decisions.)

This matters because, as noted above, nearly three in four workplace ChatGPT accounts are personal rather than corporate. Your employees are operating under consumer data policies while handling your company's information.

The key differences at enterprise tiers (as of March 2026): enterprise and API tiers do not train on your data, while consumer tiers do by default. A 30-day retention window for abuse monitoring is common. Zero data retention requires an explicit enterprise agreement. AWS Bedrock stands apart: it stores no prompts or completions by default, and model providers have no access to customer data.

All major vendors hold SOC 2 Type II and ISO 27001. The emerging differentiator is ISO/IEC 42001:2023, the first certifiable AI management system standard. For on-premises deployment, Cohere and Dell announced a partnership in May 2025 offering Cohere North for deployment on PowerEdge servers (as of March 2026). Check each vendor's current compliance page before making procurement decisions.

The gap between what your employees are using and what your contracts actually cover is where the exposure lives. Close it at the tier level before you try to close it with policy.

How to frame AI governance for skeptical leadership

Do not lead with productivity gains. Lead with this:

Your team is already using AI. Half your knowledge workers are using unapproved tools. 46% said they would not stop even if you told them to.

The question is not "should we adopt AI?" The question is "do we govern it, or do we pretend it is not happening?"

Governance is harm reduction, not restriction. The organizations succeeding are not the ones with the strictest bans. They are the ones providing sanctioned enterprise tools that are easier to use than the shadow alternatives.

Where to start if you have nothing in place

You do not need a 500-page framework. You need five things done in the next 30 days.

Audit what your team is actually using. Do not send a survey. Surveys get aspirational answers. Pull your network logs or ask your IT team for a SaaS usage report. Look for ChatGPT, Claude, Gemini, Perplexity, and any AI tool traffic you do not recognize. Then ask your team casually: "What are you using AI for this week?" The answers will tell you more than any audit report.
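If IT can export a proxy or DNS log, you can script a first pass. The sketch below assumes a CSV export with `user` and `host` columns and a hand-maintained domain list. Both the column names and the `AI_DOMAINS` list are illustrative assumptions, not a complete inventory, so adapt them to whatever your IT team can actually export.

```python
import csv
import io
from collections import Counter
from typing import Optional

# Illustrative, incomplete list of AI tool domains (assumption: extend it
# with whatever shows up in your own logs).
AI_DOMAINS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "claude.ai": "Claude",
    "gemini.google.com": "Gemini",
    "perplexity.ai": "Perplexity",
}


def match_tool(host: str) -> Optional[str]:
    """Return the tool name if host is a listed AI domain or a subdomain of one."""
    host = host.strip().lower().rstrip(".")
    for domain, tool in AI_DOMAINS.items():
        if host == domain or host.endswith("." + domain):
            return tool
    return None


def audit(log_file) -> Counter:
    """Count (user, tool) pairs in a CSV log with hypothetical 'user' and 'host' columns."""
    hits = Counter()
    for row in csv.DictReader(log_file):
        tool = match_tool(row["host"])
        if tool:
            hits[(row["user"], tool)] += 1
    return hits


if __name__ == "__main__":
    # Made-up sample rows standing in for a real log export.
    sample = io.StringIO(
        "user,host\n"
        "alice,chatgpt.com\n"
        "alice,chatgpt.com\n"
        "bob,www.perplexity.ai\n"
        "carol,intranet.example.com\n"
    )
    for (user, tool), count in audit(sample).most_common():
        print(user, tool, count)
```

This only catches domains you already know about. To find AI traffic you do not recognize, you still need a human to scan the top unmatched hosts.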

Classify your data before you write a single policy. Tier 1: safe for consumer AI (public knowledge base articles, formatting tasks). Tier 2: requires enterprise tier (customer names, deal values, performance data). Tier 3: never touches an LLM (SSNs, payment info, regulated health data, NDA-covered content). Tape this next to your team's monitors.
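A crude pre-screen for Tier 3 can catch the most obvious patterns before text gets pasted anywhere. This is a minimal sketch that assumes U.S.-style SSNs and payment card numbers. The regexes are illustrative and will miss a great deal, including health data and NDA-covered content, so treat this as a guardrail rather than a DLP tool. Text that is not flagged still needs human judgment to decide between Tier 1 and Tier 2.

```python
import re

# Illustrative patterns only (assumption: U.S. SSN format, 13-19 digit cards).
SSN_RE = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")
# 13-19 digits, optionally separated by single spaces or hyphens.
CARD_RE = re.compile(r"\b(?:\d[ -]?){12,18}\d\b")


def luhn_valid(number: str) -> bool:
    """Luhn checksum, used to cut false positives on card-like digit runs."""
    digits = [int(d) for d in number if d.isdigit()]
    total = 0
    for i, d in enumerate(reversed(digits)):
        if i % 2 == 1:  # double every second digit from the right
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


def flag_tier3(text: str) -> list[str]:
    """Return the reasons text looks like Tier 3 (never send it to an LLM)."""
    reasons = []
    if SSN_RE.search(text):
        reasons.append("possible SSN")
    for match in CARD_RE.finditer(text):
        if luhn_valid(match.group()):
            reasons.append("possible payment card number")
            break
    return reasons


if __name__ == "__main__":
    print(flag_tier3("Customer SSN 123-45-6789"))
    print(flag_tier3("Summarize this public KB article"))
```

The Luhn check matters. Without it, any long run of digits, such as an order number, would trip the card rule.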

Read the terms of service, not the marketing page. For every AI tool your team uses, check three things: whether the consumer tier trains on your inputs by default, how long data is retained, and which certifications the vendor holds at the tier you are actually paying for. Add "verified as of [date]" because these policies can change from quarter to quarter.
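One way to keep the "verified as of" dates honest is to store the three checks as structured records and flag any record older than your review window. This sketch assumes a 90-day window to match a quarterly cycle. The `VendorPolicyCheck` record and the "ExampleAI" vendor below are hypothetical placeholders, not real vendor data.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional, Tuple

REVIEW_WINDOW = timedelta(days=90)  # assumption: quarterly re-verification


@dataclass
class VendorPolicyCheck:
    """Hypothetical record of the three ToS checks for one vendor and tier."""
    vendor: str
    tier: str                      # the tier you actually pay for
    trains_on_inputs: bool         # default training behavior at this tier
    retention_days: Optional[int]  # None if zero data retention is contracted
    certifications: Tuple[str, ...]
    verified_on: date

    def is_stale(self, today: date) -> bool:
        """True once the last verification is older than the review window."""
        return today - self.verified_on > REVIEW_WINDOW


if __name__ == "__main__":
    checks = [
        # Placeholder values for a hypothetical vendor, not real policy data.
        VendorPolicyCheck("ExampleAI", "Team", False, 30,
                          ("SOC 2 Type II",), date(2026, 1, 5)),
    ]
    today = date(2026, 4, 10)
    print([c.vendor for c in checks if c.is_stale(today)])
```

A spreadsheet with the same columns and a conditional-format rule on the date column works just as well. The point is that the verification date is a field you check, not a footnote.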

Make the approved tool easier to access than the unapproved one. If your team needs to submit an IT ticket and wait three days for a Claude Team seat, they will keep using personal accounts. Match or beat the friction of the free version, or you lose.

Set a calendar reminder for 90 days from now. When that reminder fires, re-run the network audit. Check whether any vendor policies changed. Review any incidents your team flagged. Governance is not a document you write once. It is a loop you run.

Free AI acceptable use policy templates are available from ISACA, SHRM, FRSecure, and FairNow. Download one. Adapt it. Ship it. A basic policy that exists beats a perfect policy still in draft. For a comprehensive framework, start with NIST's AI Risk Management Framework and its four core functions: Govern, Map, Measure, Manage.

The gap between AI adoption and AI governance is where the real risk lives. But it is also where ops teams that get this right early create the most value for their organizations.

Sources

Security & Breach Data: IBM, Cost of a Data Breach Report 2025 · Metomic, 2025 State of Data Security Report · BigID, AI Risk & Readiness in the Enterprise: 2025 Report · Cisco, 2025 Data Privacy Benchmark Study · Cyberhaven, Q2 2024 AI Adoption and Risk Report · UpGuard, State of Shadow AI Report, November 2025

AI Governance & Readiness: S&P Global Market Intelligence / 451 Research, Voice of the Enterprise: AI & ML Use Cases 2025 · NIST, AI Risk Management Framework (AI RMF 1.0) · ISACA, AI Governance Frameworks and Templates

Regulatory & Enforcement: Italian Garante per la Protezione dei Dati Personali, OpenAI GDPR Penalty, December 2024 (annulled March 2026) · EU AI Act, Official Text and Enforcement Timeline · Colorado AI Act, SB 24-205 and SB 25B-004 · U.S. Treasury, Financial Services AI Risk Management Framework, February 2026 · National Conference of State Legislatures, 2025 AI Legislation Summary

Shadow AI & Workforce: Software AG, "Chasing Shadows" Report, October 2024

Vulnerability Research: Aim Security (Aim Labs), EchoLeak Disclosure, June 2025 · Wiz Research, DeepSeek Database Exposure, January 2025

Note: Vendor-specific claims about training policies, certifications, and enterprise features were verified as of March 2026. Verify current policies at each vendor's data usage and compliance pages before making procurement decisions.

Until next week,

@OpsJzn

AI should mean fewer steps, not more tools.